A strong fintech disaster recovery strategy is not just about keeping servers online. In financial services, even a short outage can spill into places that hurt fast: failed payments, blocked logins, delayed settlements, broken fraud checks, rising support queues, and tense calls with partners or auditors. That is why disaster recovery sits much closer to business risk in fintech than it does in many other sectors.

There is another reason this topic gets misunderstood. Many teams think backups mean they are covered. They are not. Backups matter, but they answer only one part of the problem. The real question is broader: if a serious incident hits, how quickly can critical services come back, how much data can be lost without damaging the business, who makes the recovery decision, and has the process actually been tested?

That is the point of this article. It turns disaster recovery from a fuzzy technical topic into something leaders can evaluate and improve. We will walk through four essentials: RTO, RPO, failover, and testing. Together, they shape whether your recovery plan holds up when the pressure is real.

What disaster recovery actually means in a fintech environment

Disaster recovery means being able to restore critical systems and data after a major disruption. Not a routine hiccup. Not a minor service blip. A real event that prevents the primary environment from operating safely or normally.

It helps to separate disaster recovery from two nearby concepts that often get mixed together:

- High availability reduces everyday interruptions.

- Backup and restore gives you a way to recover stored data.

- Disaster recovery is the wider capability to restore business operations after a serious incident.

That incident might be a regional cloud outage. It might be ransomware. It might be a bad deployment, data corruption, or a network issue that cuts off a key dependency. The cause shapes the recovery method, but the test is always the same: can the business recover in a controlled way?

In fintech, that challenge is harder because services are tightly connected. A customer payment may touch far more than one app and one database. It can depend on an identity service, a fraud engine, a ledger, a card processor, a notification flow, and internal support tools. So when someone says, “The app is back,” the next question should be, “Can customers actually complete the journey from start to finish?”

That is where many recovery plans fall short. They are built around infrastructure components instead of business-critical paths. Yet customers do not care that compute came back in a second region. They care that they can log in, move money, see the right balance, and trust the result.

A practical disaster recovery plan should reflect that reality. It should be built around services that matter to the business, not around a vague idea of restoring “the environment.”

____________________________________________________________________________________________________

For reference, AWS has useful guidance on recovery patterns in its Disaster Recovery of Workloads on AWS whitepaper, and NIST covers the planning side well in its Contingency Planning Guide for Federal Information Systems (SP 800-34).

____________________________________________________________________________________________________

Start with business impact, not infrastructure

The best recovery strategies begin with impact, not architecture.

That sounds obvious, but many organizations still start by asking technical questions first. Which region should we replicate to? How often should we snapshot? Do we need active-active? Those questions matter, but they come later. First, you need to know what the business is trying to protect.

Start by listing the services that really matter. In fintech, they often include:

- customer login and account access

- payment initiation and authorization

- ledger updates

- fraud monitoring

- settlement and reconciliation

- compliance and reporting functions

Now pressure-test each one with a few simple questions:

- What happens if this service is down?

- How long can the business live with that outage?

- How much recent data can be lost before the damage becomes serious?

- Which other systems must also work for this service to be useful?

That last question is the one teams often miss.

A payment workflow may look like one service on a diagram, but in real life it may depend on several internal and external pieces. If the app is back but the fraud engine is not, or the card processor cannot be reached, the business outcome is still failure. Recovery needs to be judged that way.
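One way to make that judgment concrete is to treat a service as recovered only when everything it depends on is healthy too. The sketch below is a minimal illustration, not a production health model: the service names and the `DEPENDENCIES` map are hypothetical, and "healthy" is simply a set of names you would fill from your own monitoring.

```python
# Hypothetical dependency map: service name -> services it needs directly.
DEPENDENCIES = {
    "payments": ["identity", "fraud_engine", "ledger", "card_processor"],
    "fraud_engine": ["identity"],
    "ledger": [],
    "identity": [],
    "card_processor": [],
}


def blocking_dependencies(service, healthy, deps=DEPENDENCIES):
    """Return every unhealthy service (the service itself, or any direct or
    transitive dependency) that stops `service` from being usable."""
    blocked, seen, stack = set(), set(), [service]
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        if current not in healthy:
            blocked.add(current)
        stack.extend(deps.get(current, []))
    return blocked


def is_recovered(service, healthy, deps=DEPENDENCIES):
    """A service counts as recovered only if nothing on its path is down."""
    return not blocking_dependencies(service, healthy, deps)
```

With `healthy = {"payments", "identity", "ledger", "card_processor"}`, the payments app itself is up, yet `is_recovered("payments", healthy)` is false because the fraud engine is still missing.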

This is also why service tiering matters. Not every workload deserves the same recovery treatment.

A simple model often works well:

| Tier | Typical examples | Business tolerance |
| --- | --- | --- |
| Tier 1 | Payment flows, core authentication, ledger writes | Very low tolerance for downtime or data loss |
| Tier 2 | Ops tools, support portals, internal case management | Moderate tolerance |
| Tier 3 | BI, archives, non-critical reporting | Higher tolerance |

Once this is clear, the rest of the recovery plan gets easier to shape. You stop trying to engineer everything to the same standard. Instead, you focus effort where downtime or bad data would do the most harm.

That is also where cost discipline starts. A recovery design should be strong, but it should also match the business value of the workload.
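Tiers are easier to act on when they live somewhere machine-readable rather than only in a slide. As a rough sketch, the catalog below tags a few services with a tier and derives a recovery order from it. The service names are placeholders, and using the tier as the restore order is an assumption made for illustration; your own plan may sequence recovery differently.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    TIER_1 = 1  # very low tolerance for downtime or data loss
    TIER_2 = 2  # moderate tolerance
    TIER_3 = 3  # higher tolerance


@dataclass(frozen=True)
class Service:
    name: str
    tier: Tier


# Hypothetical catalog, loosely based on the examples in the table above.
CATALOG = [
    Service("bi_dashboards", Tier.TIER_3),
    Service("payment_flows", Tier.TIER_1),
    Service("support_portal", Tier.TIER_2),
    Service("ledger_writes", Tier.TIER_1),
]


def recovery_order(services):
    """Lowest tier number first; the name breaks ties so the order is stable."""
    ordered = sorted(services, key=lambda s: (s.tier, s.name))
    return [s.name for s in ordered]
```

Calling `recovery_order(CATALOG)` puts the two Tier 1 services ahead of the support portal and leaves BI for last.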

____________________________________________________________________________________________________

If your team has infrastructure diagrams but no agreed service tiers, that gap is worth fixing early. IT-Magic helps fintech teams review recovery assumptions, cloud architecture, and resilience controls through its AWS security services and consulting work.

____________________________________________________________________________________________________

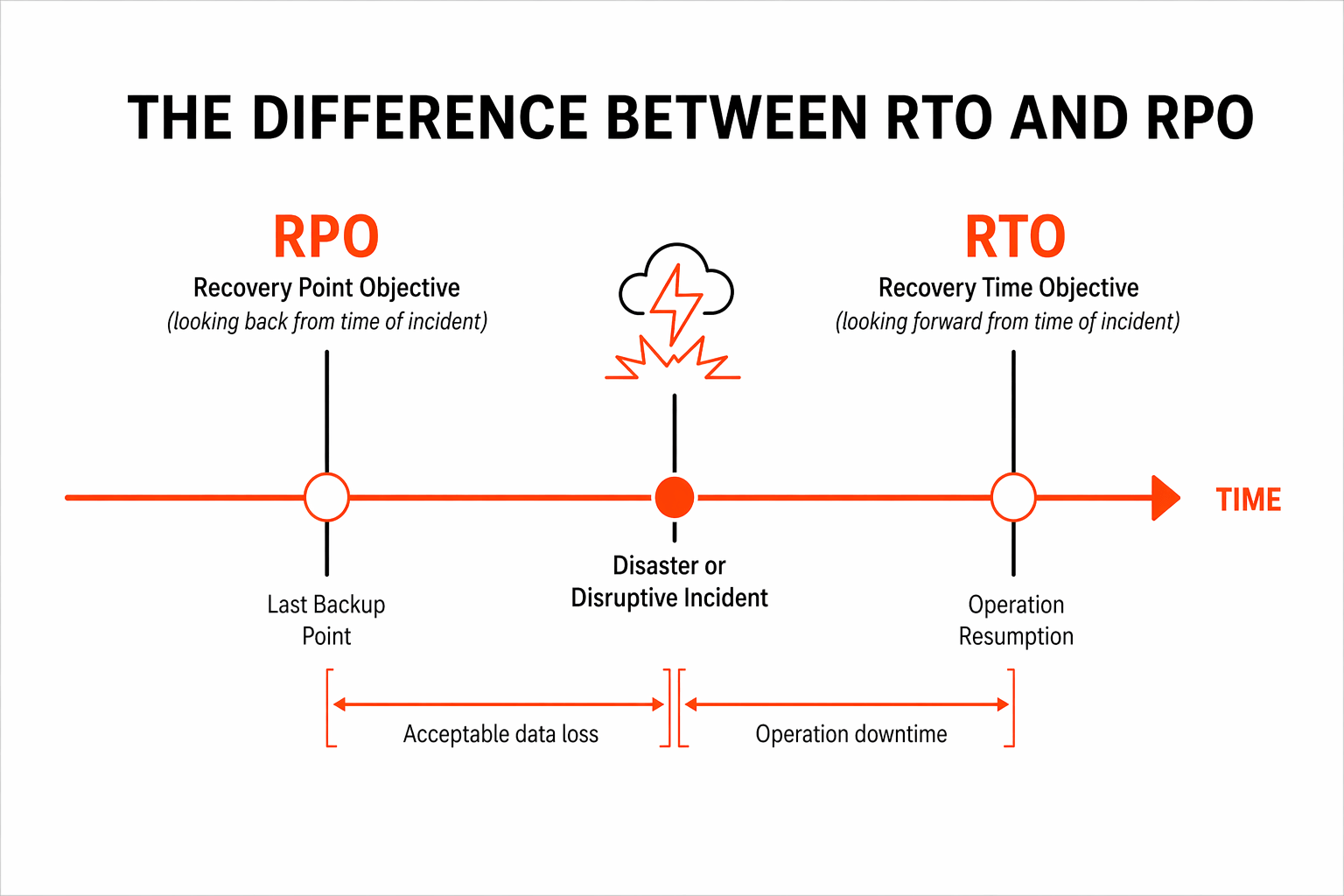

RTO and RPO: the two recovery metrics every fintech team must define

RTO and RPO sit at the heart of any fintech disaster recovery strategy.

They sound technical, but the ideas are straightforward.

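RTO, or Recovery Time Objective, is the maximum acceptable time a service can stay unavailable after an incident.

RPO, or Recovery Point Objective, is the maximum amount of data loss the business can accept, measured in time. An RPO of five minutes means that, after recovery, up to the last five minutes of writes may be missing.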

In plain English:

- RTO asks, “How long can this stay down?”

- RPO asks, “How much recent data can we afford to lose?”

These are not abstract metrics. They drive architecture, process, operating cost, and risk.

For example:

- A card authorization service may need a very short RTO and an extremely low RPO.

- A ledger platform may need a strict RPO because correctness matters as much as speed.

- An internal analytics dashboard may tolerate a longer outage and some lag in restored data.

These objectives should be defined per service, not for the company as a whole. One blanket number almost always hides the real picture.
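Per-service targets can be written down as plain data. The sketch below assumes three services that mirror the examples above; every number in it is invented for illustration and should not be read as a recommendation.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class RecoveryTarget:
    rto: timedelta  # longest acceptable outage
    rpo: timedelta  # longest acceptable window of lost data


# Illustrative values only; real targets come from business owners.
TARGETS = {
    "card_authorization": RecoveryTarget(
        rto=timedelta(minutes=5), rpo=timedelta(seconds=30)
    ),
    "ledger": RecoveryTarget(rto=timedelta(minutes=30), rpo=timedelta(seconds=1)),
    "analytics_dashboard": RecoveryTarget(
        rto=timedelta(hours=24), rpo=timedelta(hours=4)
    ),
}


def meets_target(service, downtime, data_loss, targets=TARGETS):
    """True only if both the outage and the lost-data window fit the target."""
    target = targets[service]
    return downtime <= target.rto and data_loss <= target.rpo
```

A six-hour outage with one hour of lost data passes for the analytics dashboard and fails badly for card authorization, which is exactly the difference a single company-wide number would hide.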

Here are a few common mistakes:

- setting RTO and RPO without business owners involved

- copying targets from another company or from a vendor document

- forgetting downstream dependencies

- assuming a provider SLA automatically satisfies recovery needs

- choosing ambitious targets without proving they are achievable

This is where management judgment matters. A very aggressive RTO can force a much more expensive architecture. A loose RPO can save money, but only if the business truly accepts the risk.

That tradeoff should be conscious. Not accidental.

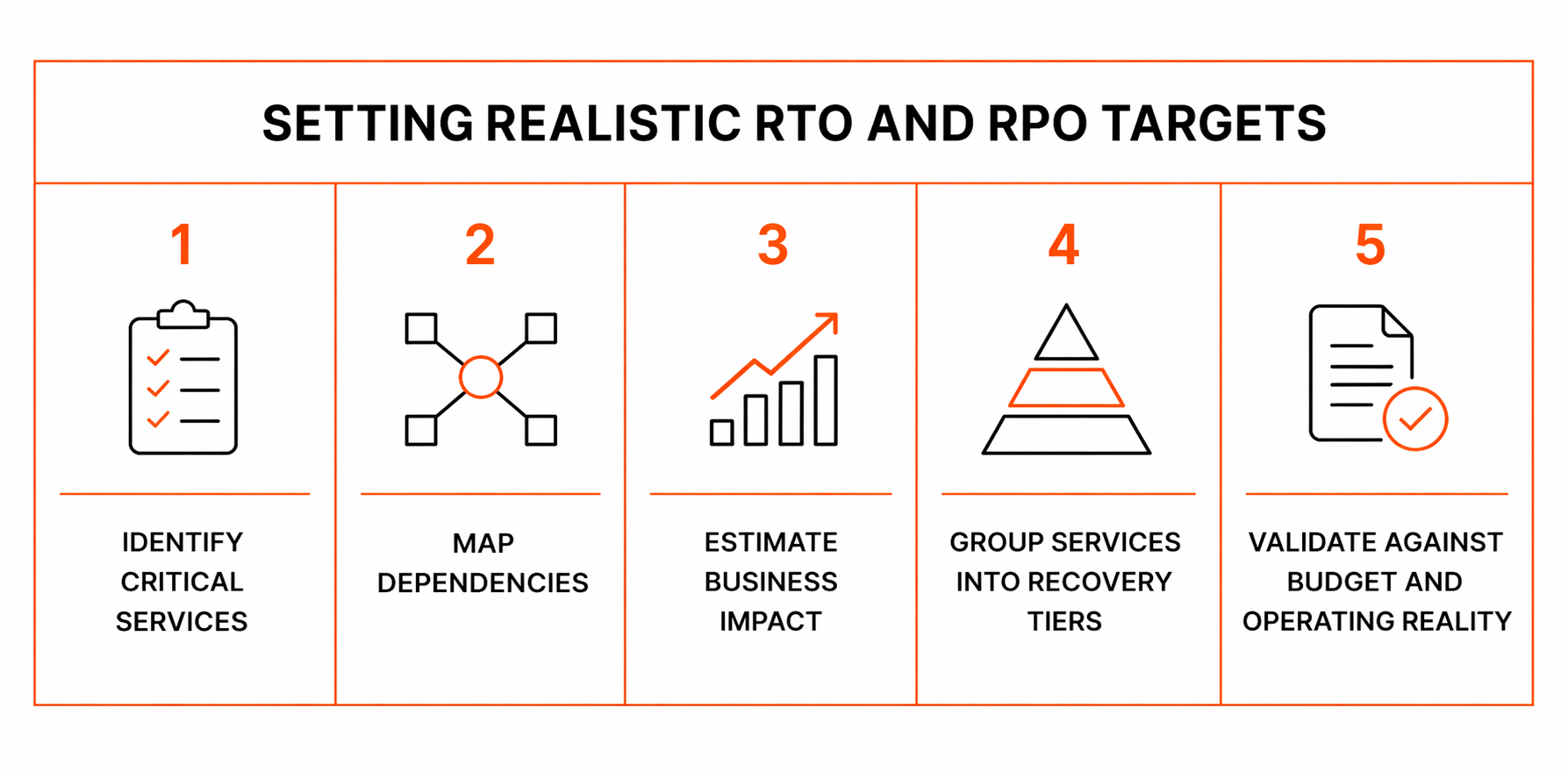

How to set realistic RTO and RPO targets

A useful way to set targets is to work through the process in order.

1. Identify critical services

Name the business services first, not the underlying components. Think in terms of customer and operational outcomes.

2. Map dependencies

List what each service needs to work. That includes databases, queues, secrets, DNS, IAM, third-party APIs, and internal support systems.

3. Estimate business impact

Ask what downtime or recent data loss would cost. The answer may include:

- missed revenue

- support load

- customer trust damage

- regulatory or audit pressure

- partner escalation

- manual recovery effort

4. Group services into recovery tiers

This step stops the “everything is critical” problem. Some services do need a very fast recovery. Others do not.

5. Validate against budget and operating reality

This is where honesty matters. Can the architecture, staffing model, and vendor stack really support the target? If not, the target or the design needs to change.
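A quick sanity check for this step is to add up the phases of a recovery and compare the total with the target. The phase names and durations below are assumptions chosen for the example; the point is only that detection, decision, and validation time count against the RTO just as much as the failover itself.

```python
from datetime import timedelta


def rto_gap(target, phases):
    """Return how far the estimated recovery time overshoots the target
    (a zero timedelta if the estimate fits)."""
    estimated = sum(phases.values(), timedelta())
    return max(estimated - target, timedelta())


# Hypothetical phase estimates for one service.
PHASES = {
    "detect": timedelta(minutes=5),
    "decide": timedelta(minutes=10),
    "fail_over": timedelta(minutes=15),
    "validate": timedelta(minutes=10),
}
```

Here the phases total 40 minutes, so `rto_gap(timedelta(minutes=30), PHASES)` reports a 10-minute overshoot. Either the target or the design has to move.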

Here is a practical way to think about tiers:

- Tier 1: customer-facing transaction paths

- Tier 2: support and operational systems

- Tier 3: reporting, BI, and lower-priority internal tools

The important part is to document assumptions.

Maybe the RTO assumes manual approval by the incident commander. Maybe the RPO depends on replication lag staying below a threshold. Maybe recovery depends on replaying events from a message queue. Those details matter because they are often where plans break down.

A written target with no assumptions behind it may look neat in a presentation, but it is not much help during a real incident.
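An assumption such as "replication lag stays below a threshold" is more useful when it is checked rather than just written down. The helper below is a minimal sketch: the 80 percent safety margin is an arbitrary choice for illustration, and the observed lag would come from whatever metric your database or replication tooling exposes.

```python
from datetime import timedelta


def rpo_at_risk(replication_lag, rpo, safety_margin=0.8):
    """True when the observed lag has used up more than `safety_margin`
    of the RPO, meaning the documented assumption is close to breaking."""
    return replication_lag > rpo * safety_margin
```

With a 60-second RPO, a lag of 50 seconds trips the check while 30 seconds does not.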

Failover strategy: what happens when your primary environment goes down

Failover is the act of shifting workloads to a secondary environment when the primary one is unavailable or no longer safe to trust.

This is where the plan becomes real.

There are four common models, and each comes with its own balance of cost, speed, and complexity.

Backup and restore

This is usually the least expensive option and the slowest to recover.

You keep backups, then rebuild or restore when needed. It can work for lower-priority systems, but it is often too slow for the most critical fintech services.

Pilot light

A small core of the environment stays ready in the recovery location. During an incident, the rest is scaled up.

This is a middle ground. It improves speed without requiring a fully running secondary environment at all times.

Warm standby

A reduced-capacity secondary environment is already running.

This usually offers faster recovery and more confidence than pilot light, because more of the platform is already running and can be health-checked continuously.

Active-active or multi-site

Multiple environments are live and ready to carry traffic.

This can support the toughest recovery goals, but it is also the most complex. Data consistency, release coordination, traffic routing, and observability all become harder.

The right model depends on four things:

- required RTO

- required RPO

- workload criticality

- available budget and operating maturity
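As a first pass, the RTO and RPO inputs can be turned into a rough shortlist. The sketch below is deliberately simplistic: the time cut-offs are assumptions invented for this example, not figures from AWS or any standard, and it ignores criticality, budget, and operating maturity entirely.

```python
from datetime import timedelta

# Assumed cut-offs for illustration only, ordered from most to least demanding.
MODEL_THRESHOLDS = [
    (timedelta(minutes=1), "active-active"),
    (timedelta(minutes=15), "warm standby"),
    (timedelta(hours=2), "pilot light"),
]


def suggest_failover_model(rto, rpo):
    """Return the model for the band that the tighter of the two targets
    falls into; anything looser than every band gets backup and restore."""
    tightest = min(rto, rpo)
    for limit, model in MODEL_THRESHOLDS:
        if tightest <= limit:
            return model
    return "backup and restore"
```

Treat the result as a conversation starter. A service that lands in the warm standby band may still end up on pilot light if the business accepts the extra risk.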

But there is a catch. Failover is not just about moving infrastructure.

A sound fintech failover plan also needs to account for:

- database consistency

- queue draining and event replay

- DNS and traffic routing behavior

- secrets and key management

- third-party provider access

- controls in the secondary environment

- decision-making authority during an incident

And here is one hard truth: a system that comes back fast but creates duplicate transactions or mismatched balances has not really recovered. In fintech, transaction integrity is part of availability.
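One common guard against duplicates during event replay is to make every write idempotent, keyed on a transaction ID. The toy ledger below shows the idea in memory; the class, the field names, and the use of integer cents are all assumptions for the sketch, and a real system would enforce the same rule with a durable unique constraint.

```python
class Ledger:
    """Toy in-memory ledger that applies each transaction ID at most once."""

    def __init__(self):
        self.balances = {}
        self.applied_ids = set()

    def apply(self, txn_id, account, amount_cents):
        """Apply a transaction; return False if this ID was already applied."""
        if txn_id in self.applied_ids:
            return False
        self.balances[account] = self.balances.get(account, 0) + amount_cents
        self.applied_ids.add(txn_id)
        return True


def replay(ledger, events):
    """Replay (txn_id, account, amount_cents) events after a failover and
    return how many were skipped as duplicates."""
    skipped = 0
    for txn_id, account, amount_cents in events:
        if not ledger.apply(txn_id, account, amount_cents):
            skipped += 1
    return skipped
```

If a transaction was already applied before the failover and then shows up again in the replayed queue, the balance stays correct and the duplicate is counted rather than silently double-posted.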

____________________________________________________________________________________________________

Unsure whether your current AWS design can actually support your stated recovery goals?

That is a good point to bring in an outside review. You can contact IT-Magic for help assessing failover options, resilience tradeoffs, and cloud recovery design.

____________________________________________________________________________________________________

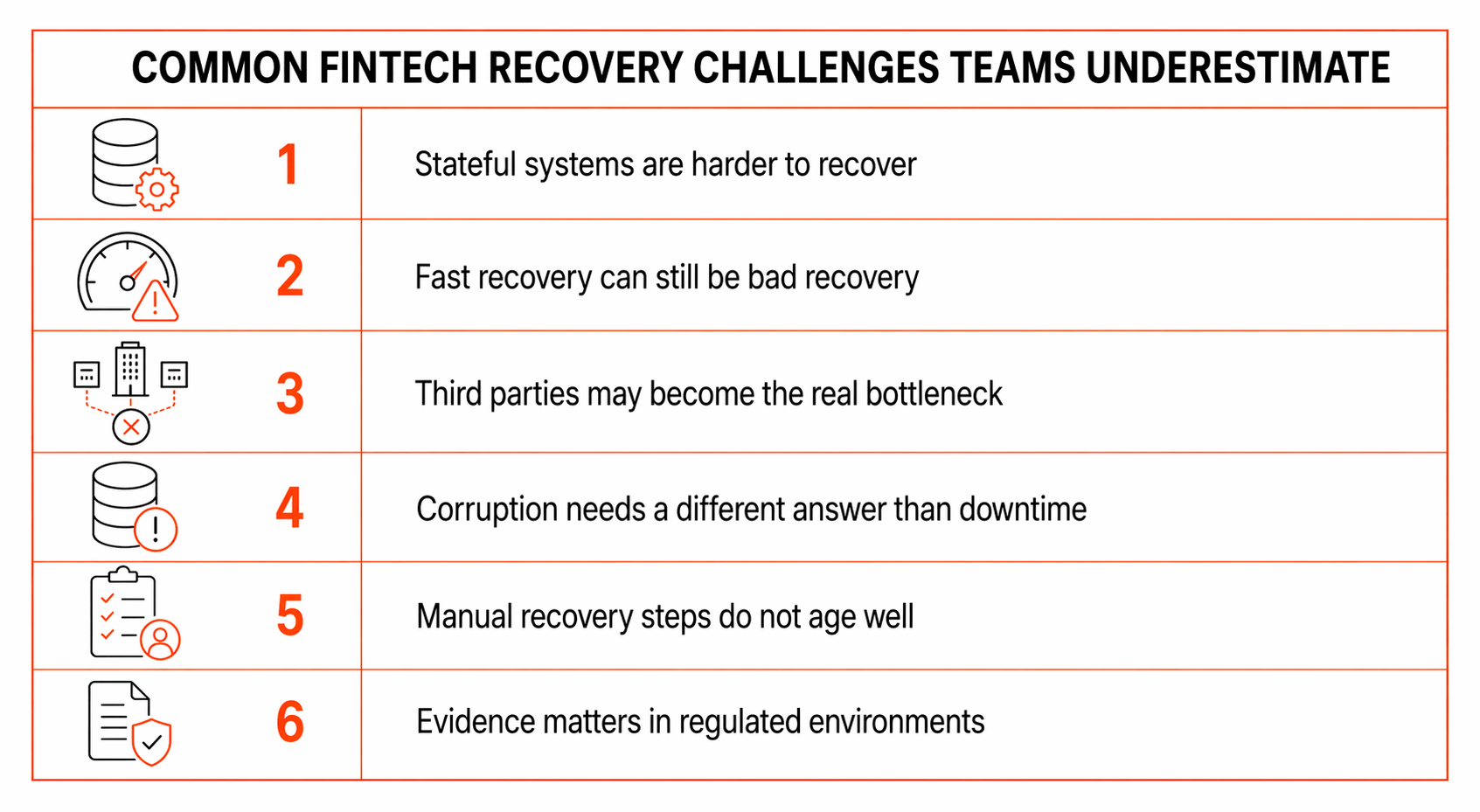

Common fintech recovery challenges teams underestimate

Some recovery problems show up in nearly every environment. Fintech adds a few more.

Stateful systems are harder to recover

Stateless services are often the easy part. Systems of record are not. Ledgers, balances, transaction histories, and reconciliation data all demand extra care.

Fast recovery can still be bad recovery

An application can be back online and still unsafe to use. Missing records, duplicate payments, broken ordering, or stale balances can create more damage than a short outage.

Third parties may become the real bottleneck

Your environment may recover perfectly while a processor, bank integration, KYC provider, or identity service remains unavailable. In practice, that means the business service is still down.

Corruption needs a different answer than downtime

Fast replication helps with availability events. It does not help if bad data gets copied quickly into the recovery environment. That is why point-in-time recovery and validation matter so much.
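For a corruption event, the restore point that matters is the last one taken before the bad data appeared, not the most recent one. A minimal sketch, assuming you have a list of restore-point timestamps and an estimate of when corruption began:

```python
from datetime import datetime


def latest_clean_restore_point(restore_points, corruption_started_at):
    """Return the newest restore point strictly before the corruption
    started, or None if every available point is already affected."""
    clean = [point for point in restore_points if point < corruption_started_at]
    return max(clean) if clean else None
```

The gap between that restore point and the moment of the incident is the data you will need to rebuild or replay, which is why it belongs in the RPO discussion as well.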

Manual recovery steps do not age well

If the failover process depends on a few senior engineers remembering tribal knowledge, the plan is fragile. Stress exposes weak documentation fast.

Evidence matters in regulated environments

It is not enough to say you have a plan. You may need to show that it was tested, reviewed, and improved. That makes logs, records, and test outputs more important than many teams expect.

What a practical fintech disaster recovery architecture should include

A good architecture does not need to be flashy. It needs to be clear, repeatable, and matched to business needs.

A practical recovery design should include:

- a clear service inventory

- dependency maps

- approved RTO and RPO targets

- data replication and backup patterns aligned to criticality

- a secondary environment that matches the chosen failover model

- infrastructure as code

- monitoring for both primary and recovery paths

- emergency access controls

- runbooks with owners and escalation rules

- service-specific recovery procedures

- logging and evidence retention

In other words, good recovery design is not just about where systems run. It is about whether people can operate them under pressure.

One more thing: recovery architecture should not live off to the side as a separate concern. It should be part of normal platform design. The more disconnected it is from day-to-day operations, the more likely it is to drift.

The testing checklist: because an untested DR plan is only a document

Testing is where confidence comes from.

It is also where reality shows up.

A plan may look solid in a document and still fail because a certificate expired, a role was missing, a queue behaved differently than expected, or a dependency had never actually been exercised in the recovery path.

That is why good programs test at more than one level:

- backup restore checks

- service recovery drills

- database validation

- failover and failback exercises

- dependency failure simulations

- tabletop sessions for decision-making and communication

This mix matters because disaster recovery is both technical and organizational. You are not only testing systems. You are testing people, handoffs, timing, authority, and clarity.

When a test runs, measure useful things:

- actual recovery time

- actual recovery point

- manual effort required

- hidden dependencies found

- business impact during the exercise

- changes needed afterward
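The first two measurements fall straight out of three timestamps. The record below is a hypothetical shape for drill results; the field names are assumptions, and the timestamps would come from your incident timeline and database logs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DrillRecord:
    outage_started_at: datetime
    service_restored_at: datetime
    last_recovered_write_at: datetime  # newest write present after recovery

    @property
    def actual_recovery_time(self) -> timedelta:
        """How long the service was actually unavailable."""
        return self.service_restored_at - self.outage_started_at

    @property
    def actual_recovery_point(self) -> timedelta:
        """How much recent data was actually missing after recovery."""
        return self.outage_started_at - self.last_recovered_write_at
```

Comparing these two values with the approved RTO and RPO for the service turns a drill from "it seemed fine" into a pass or a fail.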

A healthy rule is simple: every test should teach you something.

If a drill produces no lessons, it was probably too easy or too narrow.

Fintech disaster recovery testing checklist

Use this as a working checklist for leadership and engineering reviews.

- Have we identified and ranked critical business services?

- Do we have approved RTO and RPO targets for each critical service?

- Have we mapped infrastructure, data, and third-party dependencies?

- Are backups tested regularly, not just scheduled?

- Can we restore critical databases to a known good state?

- Do we know how to recover from corruption, not only downtime?

- Is the failover process documented step by step?

- Have we tested failover under realistic conditions?

- Have we tested failback to the primary environment?

- Are IAM roles, secrets, certificates, and keys available in recovery scenarios?

- Do monitoring, logging, and alerting work in the recovery environment?

- Are runbooks current and clearly owned?

- Do on-call and incident teams know who makes the failover decision?

- Have external vendors and provider dependencies been included?

- Are test results recorded and reviewed?

- Are disaster recovery assumptions revisited after major changes?

This kind of checklist is useful because it exposes uncertainty quickly. In many cases, the biggest warning sign is not a failed answer. It is “we are not sure.”
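If the checklist is recorded as data, the uncertain answers are easy to surface. In this sketch the answer values "yes" and "no" are an assumed convention, and anything else is treated as an unknown.

```python
def review_checklist(answers):
    """Split checklist answers into known gaps ("no") and unknowns
    (any answer that is neither "yes" nor "no")."""
    gaps = [question for question, answer in answers.items() if answer == "no"]
    unknowns = [
        question
        for question, answer in answers.items()
        if answer not in ("yes", "no")
    ]
    return gaps, unknowns
```

A "no" at least gives you a work item. The unknowns list is the one to read first.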

How often should fintech teams test disaster recovery?

There is no single schedule that fits every company.

Critical services should be tested more often than low-priority ones, and any major architecture change should trigger new recovery validation. A plan that worked six months ago may no longer reflect the environment running today.

A layered cadence is often more realistic than one giant annual exercise:

- frequent backup restore checks

- periodic tabletop exercises

- scheduled failover drills for the most critical services

- recovery testing after major releases or dependency changes

That approach keeps the plan alive. It also spreads the work across the year instead of turning disaster recovery into a once-a-year compliance ritual.

The point is not to create endless drills. The point is to make sure the recovery plan stays connected to the real platform.
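A layered cadence can be tracked with very little tooling. The intervals below are placeholders chosen for the example, not recommended frequencies; the function simply reports which test types have never run or have gone past their interval.

```python
from datetime import date, timedelta

# Hypothetical intervals; set these to match your own tiers and risk appetite.
INTERVALS = {
    "backup_restore_check": timedelta(days=30),
    "tabletop_exercise": timedelta(days=90),
    "failover_drill": timedelta(days=180),
}


def overdue_tests(last_run, today, intervals=INTERVALS):
    """Return the names of tests that never ran or ran too long ago."""
    return sorted(
        name
        for name, interval in intervals.items()
        if name not in last_run or today - last_run[name] > interval
    )
```

Running this after every major release or dependency change is one way to keep the schedule tied to the platform rather than to the calendar alone.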

Final thoughts: resilience is built before the crisis

A good fintech disaster recovery strategy is not a single tool, a cloud feature, or a line item in a policy document. It is the result of clear business priorities, sensible RTO and RPO targets, a failover model that fits the service, and regular testing that proves the plan still works.

Perfection is not the goal. Predictable recovery is.

That is what leadership teams really need: fewer assumptions, fewer surprises, and a clearer understanding of what will happen when something goes wrong.

Take action before the next outage forces the issue!

Check recovery gaps, test failover choices, or tighten resilience on AWS with our professional help. A focused review now is much cheaper than learning the hard way during a live incident.

FAQs

What is the difference between RTO and RPO?

RTO is how quickly a service must be restored after an outage. RPO is how much data loss the business can tolerate, measured in time. One defines acceptable downtime. The other defines acceptable data loss. AWS’s disaster recovery guidance is built around designing recovery patterns to meet both objectives.

Does having backups mean we already have disaster recovery?

No. Backups are important, but they are only one part of recovery. A complete program also includes business priorities, recovery targets, restoration procedures, failover design, ownership, and testing. NIST’s contingency planning guidance makes that broader planning model clear.

What failover model is best for fintech?

It depends on the service. Highly critical payment or ledger workloads may justify warm standby or active-active designs. Less critical systems may be fine with backup-and-restore or pilot light. The right choice depends on RTO, RPO, service criticality, and budget. AWS groups these four approaches as the core options for cloud disaster recovery planning.

How often should we test our disaster recovery plan?

Critical services should be tested regularly and after meaningful architecture changes. Many teams combine frequent restore checks, periodic tabletop exercises, and scheduled failover drills for the most important workloads. The exact cadence can differ, but the principle is the same: recovery confidence fades if the plan is not exercised.

What is the biggest mistake teams make with disaster recovery?

One of the biggest mistakes is assuming the plan works because it exists. Others include setting unrealistic RTO and RPO targets, ignoring third-party dependencies, and focusing only on infrastructure instead of business services and data integrity. In fintech, fast recovery without trustworthy transactions is not success.

Should every system have the same recovery target?

No. Recovery targets should reflect business importance. Applying the same RTO and RPO to every workload usually creates unnecessary cost for some systems and insufficient protection for others. Service tiering is almost always a better approach.