TL;DR:

- Cloud performance optimization is a continuous process that aligns architecture with workload demands and goals.

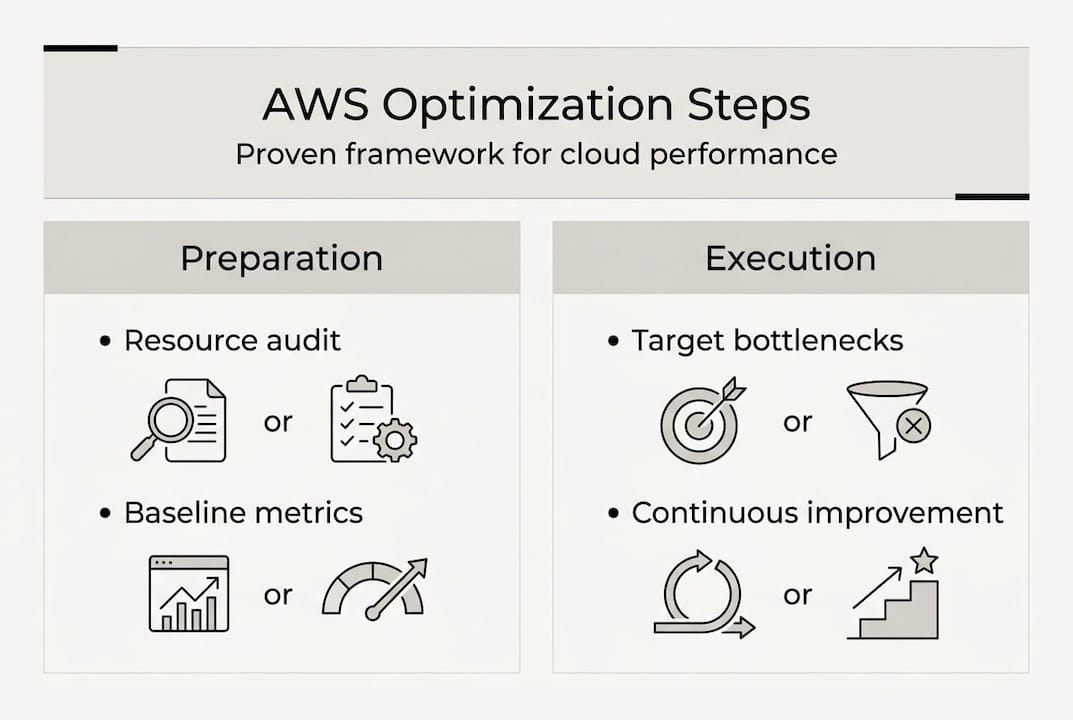

- Preparation involves auditing resources, mapping priorities, and establishing monitoring before making changes.

- Regular benchmarking and automation help sustain improvements while ensuring compliance, especially in fintech.

Your AWS bill just jumped 40%, your latency alerts are firing at 2 a.m., and the engineering team is pointing fingers at each other. Sound familiar? Most cloud performance problems don’t start with a single bad decision. They accumulate quietly through unchecked resource sprawl, mismatched compute choices, and the absence of a repeatable optimization process. For CTOs and engineering leaders at startups, fintech firms, and enterprises, the cost of inaction compounds fast. This guide walks you through a structured, AWS-centric process to systematically improve performance, reduce waste, and stay compliant, without burning out your team.

Table of Contents

- Understanding the AWS cloud performance optimization process

- Preparation: Laying the groundwork for optimization

- Executing the optimization: Step-by-step improvements

- Verification and continuous improvement

- Our take: What most guides miss about cloud performance optimization

- Need expert guidance for AWS cloud performance?

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Structure drives results | A clear, stepwise optimization process outperforms ad hoc tuning for AWS cloud performance. |
| Preparation prevents waste | Baseline analysis and business alignment avoid rework and costly missteps. |
| Continuous improvement matters | Iterative monitoring and regular reviews keep your AWS environment scalable and compliant. |
| Right-sizing saves money | Matching resources to workloads cuts unnecessary spend without sacrificing speed. |

Understanding the AWS cloud performance optimization process

Cloud performance optimization is not a one-time event. It’s a continuous discipline that aligns your AWS architecture with actual workload demands, business goals, and cost constraints. Done right, it prevents over-provisioning, eliminates bottlenecks, and keeps your system responsive as traffic scales.

The AWS Well-Architected Framework gives engineering leaders a structured lens for evaluating cloud systems. Specifically, its Performance Efficiency Pillar organizes the optimization process into five core areas:

| Focus area | What it covers |
|---|---|
| Architecture selection | Choosing the right compute, storage, and database patterns |
| Compute and hardware | Right-sizing instances, GPU vs. CPU, Graviton adoption |
| Data management | Purpose-built data stores, caching, data tiering |
| Networking and content delivery | Latency reduction, CDN, VPC design |
| Process and culture | Automation, benchmarking, team ownership |

These five areas are not independent checkboxes. They interact. A poor networking decision can undermine a perfectly sized compute layer. A culture that avoids benchmarking will repeat the same mistakes quarter after quarter.

Here’s what optimization actually delivers when done systematically:

- Lower infrastructure costs through right-sizing and reserved capacity planning

- Improved user experience via reduced latency and higher availability

- Regulatory readiness for fintech teams navigating SOC2, PCI DSS, and DORA

- Engineering velocity as automation reduces manual toil

- Predictable scaling during traffic spikes without over-building headroom

“The Performance Efficiency Pillar provides the core methodology for cloud performance optimization, focusing on five areas: architecture selection, compute and hardware, data management, networking and content delivery, and process and culture.”

For engineering leaders, this framework is not just a checklist. It’s a shared language that connects infrastructure decisions to business outcomes, which is exactly what you need when presenting optimization investments to your board.

Preparation: Laying the groundwork for optimization

With a grounded understanding of optimization principles, it’s time to prepare your cloud environment for real change. Skipping this step is where most teams go wrong. They jump to solutions before they understand the problem.

Building a secure AWS cloud foundation starts with knowing what you have. Run a full audit of your AWS resources: EC2 instances, RDS clusters, Lambda functions, S3 buckets, and network configurations. Use AWS Config and AWS Trusted Advisor to surface idle, underutilized, or misconfigured resources. Don’t assume your architecture reflects your intentions. It often doesn’t.
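Trusted Advisor can flag low-utilization instances for you, but if you also export utilization data yourself, a simple threshold rule is enough for a first pass. Here's a minimal sketch, assuming you already have CPU samples per instance (for example, exported CloudWatch `CPUUtilization` datapoints). The `InstanceMetrics` shape and both thresholds are hypothetical; tune them to your own workloads.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical thresholds for illustration only; tune them to your workloads.
IDLE_MAX_CPU = 5.0            # peak CPU never reaches 5% -> likely idle
UNDERUTILIZED_AVG_CPU = 20.0  # average CPU below 20% -> right-sizing candidate


@dataclass
class InstanceMetrics:
    """CPU utilization samples (percent) for one instance.
    The shape is hypothetical, e.g. exported CloudWatch datapoints."""
    instance_id: str
    cpu_percent: list[float]


def classify(m: InstanceMetrics) -> str:
    """Label an instance 'idle', 'underutilized', 'ok', or 'no-data'."""
    if not m.cpu_percent:
        return "no-data"
    if max(m.cpu_percent) < IDLE_MAX_CPU:
        return "idle"
    if mean(m.cpu_percent) < UNDERUTILIZED_AVG_CPU:
        return "underutilized"
    return "ok"


fleet = [
    InstanceMetrics("i-aaa", [1.0, 2.5, 3.0]),
    InstanceMetrics("i-bbb", [10.0, 15.0, 30.0]),
    InstanceMetrics("i-ccc", [55.0, 70.0, 65.0]),
]
for m in fleet:
    print(m.instance_id, classify(m))
```

CPU alone can mislead (memory-bound or network-bound services look idle on CPU), so treat the output as a list of instances to investigate, not to terminate.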

The Performance Engineering guidance from AWS describes a four-pillar process: test-data generation, test observability, test automation, and test reporting. Critically, this process should begin at the design phase, not after you’ve already deployed. Retrofitting performance into a live system costs far more than building it in from the start.
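To make the four pillars concrete, here's a minimal Python harness that maps each one to a function. It's a sketch under stated assumptions: `handle_request` is a hypothetical stand-in for the system under test, the payload schema is invented, and a real test would drive a deployed endpoint with a load-testing tool rather than an in-process call.

```python
import random
import time
from statistics import quantiles


def generate_test_data(n: int, seed: int = 42) -> list[dict]:
    """Test-data generation: reproducible synthetic payloads (hypothetical schema)."""
    rng = random.Random(seed)
    return [{"account_id": rng.randint(1, 10_000), "amount": round(rng.uniform(1, 500), 2)}
            for _ in range(n)]


def handle_request(payload: dict) -> float:
    """Hypothetical stand-in for the system under test."""
    return payload["amount"] * 1.01


def run_test(payloads: list[dict]) -> list[float]:
    """Test automation + observability: call the system, record latency in ms."""
    latencies = []
    for p in payloads:
        start = time.perf_counter()
        handle_request(p)
        latencies.append((time.perf_counter() - start) * 1000)
    return latencies


def report(latencies_ms: list[float]) -> dict:
    """Test reporting: summarize the run as percentiles."""
    cuts = quantiles(latencies_ms, n=100)  # 99 cut points: index 49 = p50, 98 = p99
    return {"count": len(latencies_ms), "p50_ms": cuts[49],
            "p95_ms": cuts[94], "p99_ms": cuts[98]}


print(report(run_test(generate_test_data(1_000))))
```

Seeding the generator keeps test data identical between runs, so a change in the report reflects the system, not the input.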

Here’s a practical preparation sequence:

- Audit all active AWS resources and tag them by workload, team, and environment

- Map business priorities to technical requirements (e.g., low latency for payments, high throughput for analytics)

- Identify stakeholders including finance, security, and product owners, not just engineering

- Select baseline monitoring tools and configure dashboards before making any changes

- Document your current performance baselines so you can measure improvement objectively
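The tagging step is easy to check automatically. Here's a minimal sketch that audits the `workload`, `team`, and `environment` keys from the list above against output from the Resource Groups Tagging API. It assumes you pass in a `boto3.client("resourcegroupstaggingapi")`; the sample ARNs and tag values are made up. The key comparison is case-sensitive, as AWS tag keys are.

```python
REQUIRED_TAGS = {"workload", "team", "environment"}


def fetch_mappings(client) -> list[dict]:
    """Collect tag mappings for the client's Region.
    `client` is assumed to be boto3.client("resourcegroupstaggingapi")."""
    mappings: list[dict] = []
    for page in client.get_paginator("get_resources").paginate():
        mappings.extend(page["ResourceTagMappingList"])
    return mappings


def missing_tags(mappings: list[dict],
                 required: set[str] = REQUIRED_TAGS) -> dict[str, set[str]]:
    """Map each resource ARN to the required tag keys it is missing."""
    gaps_by_arn: dict[str, set[str]] = {}
    for mapping in mappings:
        present = {tag["Key"] for tag in mapping.get("Tags", [])}
        gaps = required - present
        if gaps:
            gaps_by_arn[mapping["ResourceARN"]] = gaps
    return gaps_by_arn


# Example input in the shape get_resources returns (ARNs are made up).
sample = [
    {"ResourceARN": "arn:aws:ec2:us-east-1:111122223333:instance/i-aaa",
     "Tags": [{"Key": "workload", "Value": "payments"},
              {"Key": "team", "Value": "core"},
              {"Key": "environment", "Value": "prod"}]},
    {"ResourceARN": "arn:aws:s3:::example-bucket",
     "Tags": [{"Key": "team", "Value": "data"}]},
]
print(missing_tags(sample))
```

Because `get_resources` only returns tagged or previously tagged resources, pair it with the AWS Config resource inventory to catch resources that were never tagged at all.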

For tool selection, here’s a quick comparison of what to use and when:

| Tool | Best for | Cost |
|---|---|---|
| AWS CloudWatch | Real-time metrics and alerting | Included / usage-based |
| AWS Compute Optimizer | Right-sizing recommendations | Free (enhanced infrastructure metrics are paid) |
| AWS Cost Explorer | Spend analysis and forecasting | Free in the console (API requests are billed) |
| AWS Well-Architected Tool | Structured architecture reviews | Free |
| Datadog / Grafana | Advanced observability and dashboards | Third-party pricing |

Fintech teams building on AWS for retail or financial services clients should also map compliance requirements at this stage. Knowing which workloads touch regulated data shapes every optimization decision downstream.

Pro Tip: Schedule a Well-Architected Review before you start making changes, not after. It surfaces blind spots your team has normalized and gives you a prioritized list of risks to address.

Executing the optimization: Step-by-step improvements

With preparation complete, the next move is targeted action. Here’s how to optimize AWS workloads effectively without creating new problems in the process.

Start with compute. Right-sizing resources is one of the highest-return actions you can take. AWS Compute Optimizer analyzes CloudWatch metrics and recommends instance type changes based on actual usage patterns, not assumptions. Teams routinely find 20 to 40% of their compute is oversized.
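If you want Compute Optimizer's findings in a script or a weekly report rather than the console, here's a minimal sketch. It assumes a `boto3.client("compute-optimizer")` in an account and Region already opted in; the field names follow the `GetEC2InstanceRecommendations` API reference as we understand it, so verify them against the current docs before relying on this.

```python
def overprovisioned_instances(client) -> list[tuple[str, str, str]]:
    """Return (instance ARN, current type, top-ranked recommended type) for EC2
    instances that Compute Optimizer classifies as over-provisioned.

    `client` is assumed to be boto3.client("compute-optimizer").
    """
    results: list[tuple[str, str, str]] = []
    kwargs: dict[str, str] = {}
    while True:
        resp = client.get_ec2_instance_recommendations(**kwargs)
        for rec in resp.get("instanceRecommendations", []):
            # Finding values include Underprovisioned, Overprovisioned, Optimized.
            if rec.get("finding", "").lower() != "overprovisioned":
                continue
            # Rank 1 is Compute Optimizer's top recommendation option.
            options = sorted(rec.get("recommendationOptions", []), key=lambda o: o["rank"])
            if options:
                results.append((rec["instanceArn"], rec["currentInstanceType"],
                                options[0]["instanceType"]))
        token = resp.get("nextToken")
        if not token:
            return results
        kwargs = {"nextToken": token}  # fetch the next page
```

In a real account you would call `overprovisioned_instances(boto3.client("compute-optimizer"))`. Treat each row as a candidate to benchmark before changing anything, especially when the top option switches architecture (for example, to a Graviton type).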

Here’s the execution sequence that works in practice:

- Run AWS Compute Optimizer across all EC2 and Lambda workloads

- Implement auto-scaling for variable workloads using target tracking policies

- Evaluate serverless options for event-driven or unpredictable traffic patterns

- Migrate to purpose-built data stores (DynamoDB for key-value, Aurora for relational, Redshift for analytics)

- Apply DevOps automation to enforce consistent configurations across environments

- Integrate FinOps practices to align engineering decisions with financial accountability
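For the auto-scaling step, a target tracking policy tells an EC2 Auto Scaling group to add or remove instances so a metric stays near a target value. Below is a sketch that builds the arguments for boto3's `put_scaling_policy`. The group name and the 50% CPU target are hypothetical examples, not recommendations, and `client` is assumed to be `boto3.client("autoscaling")`.

```python
def target_tracking_policy(asg_name: str, target_cpu: float = 50.0) -> dict:
    """Build put_scaling_policy arguments that keep the group's average CPU
    near target_cpu (percent)."""
    if not 0 < target_cpu < 100:
        raise ValueError("target_cpu must be between 0 and 100")
    return {
        "AutoScalingGroupName": asg_name,
        "PolicyName": f"{asg_name}-cpu-target-{int(target_cpu)}",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingConfiguration": {
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ASGAverageCPUUtilization"
            },
            "TargetValue": target_cpu,
        },
    }


def apply_policy(client, asg_name: str, target_cpu: float = 50.0) -> dict:
    """Create or update the policy. `client` is assumed to be boto3.client("autoscaling")."""
    return client.put_scaling_policy(**target_tracking_policy(asg_name, target_cpu))


print(target_tracking_policy("payments-api-asg", 60))  # hypothetical group name
```

Keeping the parameter builder separate from the API call makes the policy easy to review in code and to reproduce in CloudFormation or Terraform later.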

When choosing your compute model, the trade-offs matter:

| Approach | Best fit | Trade-off |
|---|---|---|
| Serverless (Lambda) | Event-driven, unpredictable load | Cold starts, execution limits |
| Containers (ECS/EKS) | Microservices, consistent workloads | Operational overhead |
| EC2 (right-sized) | Steady-state, latency-sensitive apps | Manual management required |
| Graviton instances | CPU-intensive workloads | Arm64 app compatibility testing needed |
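Cost is part of the Lambda-vs-EC2 trade-off, and a back-of-envelope model helps frame it. This sketch compares Lambda's request plus GB-second charges against always-on EC2 instances. All prices in the example are placeholders for illustration; look up current pricing for your Region and architecture. It also ignores free tiers, data transfer, and the operational overhead the table mentions.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common approximation of hours in a month


@dataclass
class LambdaProfile:
    invocations_per_month: int
    avg_duration_s: float
    memory_gb: float
    price_per_request: float    # placeholder; use current pricing for your Region
    price_per_gb_second: float  # placeholder; use current pricing for your Region


def lambda_monthly_cost(p: LambdaProfile) -> float:
    """Request charges plus duration charges (GB-seconds); nothing else."""
    gb_seconds = p.invocations_per_month * p.avg_duration_s * p.memory_gb
    return p.invocations_per_month * p.price_per_request + gb_seconds * p.price_per_gb_second


def ec2_monthly_cost(hourly_price: float, instances: int = 1) -> float:
    """Always-on instances billed for every hour of the month."""
    return hourly_price * HOURS_PER_MONTH * instances


profile = LambdaProfile(10_000_000, 0.2, 0.5, 0.0000002, 0.0000166667)
print(f"Lambda: ${lambda_monthly_cost(profile):.2f}  EC2: ${ec2_monthly_cost(0.10):.2f}")
```

Lambda cost grows linearly with invocations while an always-on instance is a flat cost, so the comparison can flip as sustained traffic grows. Run it with your own numbers rather than relying on these placeholders.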

One anti-pattern we see constantly: teams use a single relational database for every data access pattern. It’s the wrong tool for most of those jobs. Caching layers like ElastiCache, combined with purpose-built stores, can reduce database load by 60% or more on read-heavy workloads.
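The usual way a cache takes load off the database is the cache-aside pattern: read from the cache first, fall back to the database on a miss, then store the result with a TTL. Here's a minimal in-process sketch where a dict stands in for ElastiCache and `load_from_db` is a hypothetical loader; with a real Redis or Memcached client you'd swap the dict for get/set calls with an expiry.

```python
import time
from typing import Callable


class CacheAside:
    """Cache-aside reads with a TTL. A dict stands in for ElastiCache here."""

    def __init__(self, load_from_db: Callable[[str], object], ttl_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self._load = load_from_db
        self._ttl = ttl_s
        self._clock = clock
        self._store: dict[str, tuple[float, object]] = {}  # key -> (expires_at, value)
        self.db_reads = 0

    def get(self, key: str) -> object:
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]                  # cache hit: no database read
        value = self._load(key)              # miss or expired: go to the database
        self.db_reads += 1
        self._store[key] = (now + self._ttl, value)
        return value


cache = CacheAside(lambda key: {"id": key, "balance": 100}, ttl_s=30.0)
for _ in range(100):
    cache.get("acct-1")
print(cache.db_reads)  # 1: the other 99 reads were served from the cache
```

The TTL is the knob that trades freshness for database load; for data like account balances in fintech workloads, decide explicitly how stale a read is allowed to be.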

For AWS infrastructure for fintech, the stakes are higher. Performance and compliance must move together. A latency improvement that introduces a compliance gap is not an improvement.

Pro Tip: Don’t optimize everything at once. Prioritize the top three bottlenecks by business impact, fix them, measure the result, then move to the next. Sequential wins build team confidence and reduce rollback risk.
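"By business impact" is easier to agree on when the scoring is written down. Here's one hypothetical scoring scheme as a sketch: latency above the SLO, multiplied by affected traffic and a business weight your stakeholders assign. The fields, weights, and backlog entries are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Bottleneck:
    name: str
    daily_requests_affected: int
    p99_ms: float
    slo_p99_ms: float
    business_weight: float  # hypothetical: e.g. 3.0 for payments, 1.0 for internal tools

    def impact(self) -> float:
        # Only latency above the SLO counts; weight it by traffic and business value.
        overshoot = max(0.0, self.p99_ms - self.slo_p99_ms)
        return self.daily_requests_affected * overshoot * self.business_weight


def top_n(bottlenecks: list[Bottleneck], n: int = 3) -> list[str]:
    """Names of the n highest-impact bottlenecks, skipping any already within SLO."""
    ranked = sorted(bottlenecks, key=lambda b: b.impact(), reverse=True)
    return [b.name for b in ranked[:n] if b.impact() > 0]


backlog = [
    Bottleneck("checkout", 50_000, 900, 300, 3.0),
    Bottleneck("search", 200_000, 450, 400, 1.0),
    Bottleneck("reports", 5_000, 4_000, 2_000, 0.5),
    Bottleneck("login", 80_000, 250, 300, 2.0),
    Bottleneck("ledger-sync", 10_000, 1_500, 500, 2.0),
]
print(top_n(backlog))
```

The exact formula matters less than making it explicit, so finance, product, and engineering argue about the weights rather than about whose service gets fixed first.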

Verification and continuous improvement

With optimizations implemented, it’s essential to track results and foster a culture of continuous improvement. Optimization without measurement is just change.

Start by comparing post-optimization metrics against your baselines. Focus on what actually matters to the business:

- p99 latency for user-facing services (not just averages, which hide outliers)

- Error rates across API endpoints and Lambda invocations

- Cost per transaction as a normalized efficiency metric

- Auto-scaling event frequency to detect over- or under-provisioning

- Resource utilization rates across EC2, RDS, and ECS clusters

- Time to recovery after incidents, a key reliability indicator
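Comparing against baselines works best as an automated check rather than a dashboard someone has to remember to open. Here's a minimal sketch covering three of the metrics above: p99 latency, error rate, and cost per transaction. The 5% tolerance and the example numbers are hypothetical.

```python
from statistics import quantiles


def p99(latencies_ms: list[float]) -> float:
    """99th percentile: quantiles(n=100) returns 99 cut points; the last is p99."""
    return quantiles(latencies_ms, n=100)[98]


def summarize(latencies_ms: list[float], errors: int, requests: int,
              monthly_cost: float, transactions: int) -> dict[str, float]:
    return {
        "p99_ms": p99(latencies_ms),
        "error_rate": errors / requests,
        "cost_per_txn": monthly_cost / transactions,
    }


def regressions(baseline: dict[str, float], current: dict[str, float],
                tolerance: float = 0.05) -> list[str]:
    """Metrics worse than baseline by more than `tolerance` (lower is better for all)."""
    return [name for name, base in baseline.items()
            if current[name] > base * (1 + tolerance)]


baseline = {"p99_ms": 400.0, "error_rate": 0.010, "cost_per_txn": 0.0020}
current = {"p99_ms": 300.0, "error_rate": 0.0104, "cost_per_txn": 0.0025}
print(regressions(baseline, current))
```

In this example latency improved but cost per transaction regressed, which is exactly the kind of trade-off that averages and single-metric dashboards hide.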

The AWS Well-Architected Framework is explicit about avoiding perception-based decisions. Benchmarking over assumptions is not optional. Teams that rely on gut feel for capacity planning consistently over-provision and overspend.

“Trade-offs in architecture require continuous improvement via DevOps and automation. For fintech, balance scalability with compliance requirements like SOC2 and DORA.”

For teams scaling with AWS, automation is what separates sustainable optimization from a one-time project. Use AWS Systems Manager for patch automation, CloudFormation or Terraform for infrastructure-as-code, and CloudWatch alarms with automated remediation for common failure patterns.
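As one example of alarm-driven remediation, a CloudWatch alarm on the EC2 `StatusCheckFailed_System` metric can trigger the built-in recover action. This sketch builds the arguments for boto3's `put_metric_alarm`; the instance ID and Region are placeholders, the one-minute period and two evaluation periods are example values, and `client` is assumed to be `boto3.client("cloudwatch", region_name=region)`.

```python
def recover_alarm_params(instance_id: str, region: str) -> dict:
    """put_metric_alarm arguments that trigger EC2 automatic recovery when the
    system status check fails for two consecutive one-minute periods."""
    return {
        "AlarmName": f"recover-{instance_id}",
        "Namespace": "AWS/EC2",
        "MetricName": "StatusCheckFailed_System",
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Statistic": "Maximum",
        "Period": 60,
        "EvaluationPeriods": 2,
        "Threshold": 1.0,
        "ComparisonOperator": "GreaterThanOrEqualToThreshold",
        "AlarmActions": [f"arn:aws:automate:{region}:ec2:recover"],
    }


def create_recover_alarm(client, instance_id: str, region: str) -> None:
    """`client` is assumed to be boto3.client("cloudwatch", region_name=region)."""
    client.put_metric_alarm(**recover_alarm_params(instance_id, region))


print(recover_alarm_params("i-0123456789abcdef0", "eu-west-1"))  # placeholder IDs
```

Automatic recovery is supported only on certain instance types and configurations, so check the EC2 documentation before relying on it; the same pattern works with the reboot action or an SNS topic that starts a Systems Manager runbook.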

Fintech and enterprise teams face an additional layer of complexity. Applying Well-Architected reviews on a regular cadence ensures that performance improvements don’t inadvertently create compliance gaps. SOC2 and DORA requirements shape how you log, monitor, and respond to incidents, and those requirements should be baked into your optimization process, not bolted on afterward.

Pro Tip: Create a performance runbook for each critical workload. Document the expected behavior, alert thresholds, and remediation steps. When something breaks at 3 a.m., your on-call engineer shouldn’t be guessing.

Our take: What most guides miss about cloud performance optimization

Most optimization guides focus on tools and checklists. What they undervalue is mechanical sympathy, the idea that your infrastructure choices must match the physical reality of how hardware actually works. Graviton instances don’t just save money. They change memory bandwidth and instruction latency in ways that affect application behavior. If your team doesn’t understand that, you’re optimizing blind.

The second gap is stakeholder alignment. We’ve seen technically excellent optimization projects fail because finance didn’t understand the trade-offs and pulled the budget mid-execution. Bring your CFO and compliance lead into the conversation early. Frame optimization as risk reduction and margin improvement, not just engineering hygiene.

Third, don’t treat compliance as a constraint that comes after performance. For fintech teams especially, secure, efficient cloud design means compliance is a design input, not an audit outcome. Teams that build this way move faster, not slower.

Finally, the teams that sustain performance gains are the ones that democratize access to managed services. When every engineer can spin up an Aurora Serverless cluster or a CloudFront distribution without waiting for a platform team, learning accelerates and bottlenecks dissolve.

Pro Tip: Run Well-Architected reviews every quarter, not every year. Architecture drift happens fast, and quarterly reviews catch it before it becomes expensive.

Need expert guidance for AWS cloud performance?

If your team has the intent but not the bandwidth, that’s a common situation for engineering leaders running lean. Optimization at scale requires deep AWS expertise, and the cost of getting it wrong compounds quickly.

At IT-Magic, we’ve delivered AWS infrastructure support across 700+ projects for startups, fintech firms, and enterprises since 2010. Our certified AWS engineers handle right-sizing, auto-scaling, compliance alignment, and continuous monitoring so your team can focus on product. Whether you need AWS cost optimization to cut waste or AWS DevOps services to automate your delivery pipeline, we bring hands-on experience to every engagement. Let’s build a cloud environment that performs and scales without surprises.

Frequently asked questions

What are the first steps in optimizing cloud performance on AWS?

Start by auditing your current environment, establishing baseline metrics, and aligning stakeholders to business priorities. The performance engineering process recommends beginning at the design phase to avoid costly rework later.

Which AWS tools are essential for performance optimization?

AWS Compute Optimizer, CloudWatch, and the Well-Architected Tool are core for ongoing performance, scaling, and cost monitoring. Continuous monitoring and baselines are foundational best practices that these tools directly support.

How does compliance impact performance optimization in fintech?

Fintech teams must balance scalability with strict standards like SOC2 and DORA, so regular benchmarking and architecture reviews are not optional. Compliance requirements shape logging, monitoring, and incident response, all of which affect your optimization decisions.

Why is right-sizing so important in AWS optimization?

Right-sizing ensures resources match real workload needs, preventing excess spend or degraded performance. AWS recommends right-sizing resources as a core best practice because over-provisioned instances are one of the most common and correctable sources of cloud waste.
