TL;DR:

- Kubernetes orchestration is a continuous reconciliation engine built on declarative control, not just container scheduling.

- Understanding this distinction transforms how teams design, deploy, and operate scalable, resilient cloud infrastructure.

Most engineering teams think Kubernetes orchestration is glorified container scheduling. That mental model costs you weeks of incident response, botched deployments, and infrastructure that fights you instead of serving you. Kubernetes orchestration is actually a continuous reconciliation engine built on declarative control, and the moment your team internalizes that distinction, everything about how you design, deploy, and operate cloud infrastructure changes. This article breaks down exactly how orchestration works, what makes it powerful for scalable DevOps, and where most enterprises quietly go wrong.

Table of Contents

- Defining Kubernetes orchestration: Beyond container scheduling

- How Kubernetes orchestrates clusters: Control plane, nodes, and scheduling

- The reconciliation loop: Automated rollout and update of workloads

- Networking orchestration: Service endpoints, Gateway, and Ingress

- Kubernetes orchestration vs. workflow orchestration: Avoiding common confusion

- The uncomfortable truth most experts won’t tell you about Kubernetes orchestration

- Take the next step: Kubernetes orchestration support for your team

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Declarative control | Kubernetes orchestration relies on a declarative model where you specify the desired state and the system reconciles it automatically. |
| Automated workload updates | Controllers enable progressive rollouts and instant rollbacks, reducing risk and downtime for enterprise applications. |
| Stable networking abstractions | Service endpoints and Gateways automate and secure traffic routing, supporting scalable microservices architectures. |
| Extensible scheduling | Enterprise-specific orchestration logic is possible with plugin-based scheduler extensions. |
| Avoid architecture confusion | Kubernetes orchestrates workloads, not general workflows; integrate properly with workflow engines to prevent mistakes. |

Defining Kubernetes orchestration: Beyond container scheduling

The word “orchestration” sounds musical, coordinated, even gentle. In practice, Kubernetes orchestration is more like a relentless control loop that never sleeps. You tell Kubernetes what the world should look like, and it never stops trying to make that true.

“Kubernetes orchestration is the mechanism by which Kubernetes continuously drives the cluster toward a user-declared desired state, rather than requiring you to prescribe step-by-step workflows.” (Kubernetes overview)

That single sentence separates Kubernetes from every traditional deployment tool your team may have used before. You are not writing scripts that say “start container A, wait, then start container B.” You are writing a declaration of intent, and Kubernetes figures out the how.

The real architecture underneath this is what makes it enterprise-grade. Kubernetes operates as a distributed scheduler combined with a declarative control plane and a reconciliation engine working in concert. Each of these components has a specific role:

- Declarative control plane: Stores and enforces the desired state of every resource in the cluster

- Distributed scheduler: Matches workload requirements to node capacity across the entire cluster

- Reconciliation engine: Continuously compares actual state to desired state and acts on any difference

The most common mistake CTOs make is treating Kubernetes like a traditional workflow engine. They expect it to follow ordered steps. Instead, it operates reactively and continuously. If a node fails at 3 AM, Kubernetes does not wait for an on-call engineer. It detects the discrepancy and begins correcting it immediately.
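To make the control-loop idea concrete, here is a minimal sketch of a single reconciliation pass. It is a toy model, not Kubernetes code: the `Cluster` class, its methods, and the Pod names are all hypothetical stand-ins for real cluster state.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy stand-in for cluster state; not a real Kubernetes API."""
    desired_replicas: int
    running_pods: list = field(default_factory=list)
    _next_id: int = 0

    def start_pod(self) -> None:
        self.running_pods.append(f"pod-{self._next_id}")
        self._next_id += 1

    def stop_pod(self) -> None:
        self.running_pods.pop()


def reconcile(cluster: Cluster) -> int:
    """Run one pass: compare desired to actual and return the number of corrective actions."""
    diff = cluster.desired_replicas - len(cluster.running_pods)
    for _ in range(abs(diff)):
        if diff > 0:
            cluster.start_pod()
        else:
            cluster.stop_pod()
    return abs(diff)


cluster = Cluster(desired_replicas=3)
reconcile(cluster)                     # converges from 0 to 3 Pods
cluster.running_pods.remove("pod-1")   # simulate a crash at 3 AM
actions = reconcile(cluster)           # the next pass notices and replaces the Pod
print(cluster.running_pods, actions)
```

Notice that nothing in `reconcile` knows why the Pod disappeared. It only sees the gap between desired and actual state, which is the property that lets the same loop handle crashes, node failures, and scale changes.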

If you are evaluating container orchestration platforms and comparing your options, an ECS vs EKS comparison is a useful starting point. And if you are ready to move forward with Kubernetes specifically, our Kubernetes setup guide covers the practical configuration steps in detail.

How Kubernetes orchestrates clusters: Control plane, nodes, and scheduling

Understanding the mechanism at a conceptual level is one thing. Knowing how it actually works in a production cluster is another. Let’s walk through the architecture in concrete terms.

The control plane handles global decision-making, responding to events across the cluster and maintaining the authoritative record of desired state. The worker nodes execute workloads and report their actual state back to the control plane. This separation is intentional. It means your scheduling logic and your execution environment are decoupled, which is exactly what you need for fault tolerance at scale.

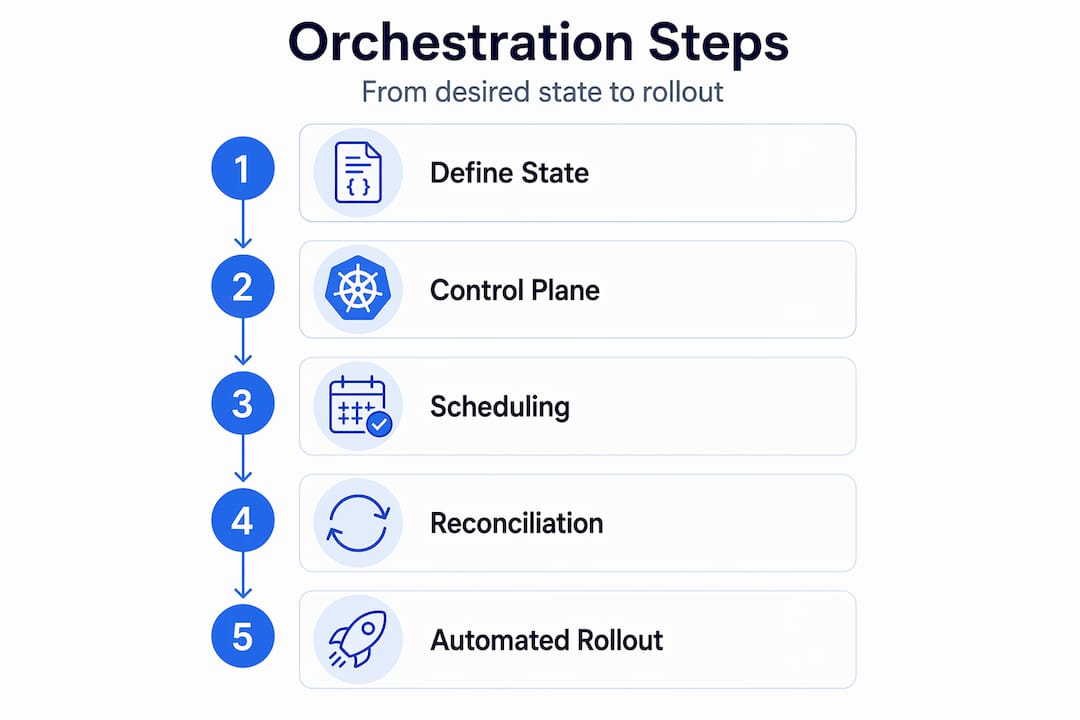

Here is how the orchestration sequence works in practice:

- You declare desired state by applying a YAML manifest (a Deployment, StatefulSet, or DaemonSet) to the API server

- The API server validates and stores that manifest in etcd, the distributed key-value store that is Kubernetes’ source of truth

- The scheduler watches for unbound Pods and evaluates all available nodes based on resource requests, taints, tolerations, and affinity rules

- The scheduler assigns the Pod to the best-fit node by writing a binding to the API server

- The kubelet on that node pulls the assignment, starts the container runtime, and reports status back

- Controllers continuously watch for any deviation and react accordingly
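The first step in that sequence, declaring desired state, can be sketched as plain data. The snippet below builds a Deployment manifest as a Python dictionary and prints it as JSON, which `kubectl` accepts alongside YAML. The workload name, image path, and resource figures are hypothetical placeholders.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Build a minimal apps/v1 Deployment; values here are illustrative only."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the Pod template labels.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        # Requests are what the scheduler uses to pick a node.
                        "resources": {"requests": {"cpu": "250m", "memory": "256Mi"}},
                    }]
                },
            },
        },
    }


manifest = deployment_manifest("web", "registry.example.com/web:1.0.0", replicas=3)
print(json.dumps(manifest, indent=2))
```

Assuming the script is saved as `manifest.py`, piping its output to `kubectl apply -f -` would submit the declaration to the API server. The manifest states what should exist and contains no start or stop instructions.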

| Component | Role | Failure impact |
|---|---|---|
| API server | Central communication hub | Cluster management stops; workloads continue |
| etcd | Desired state storage | Cluster state loss; critical |
| Scheduler | Pod-to-node assignment | New Pods stay pending |
| Controller manager | Reconciliation loops | State drift goes uncorrected |
| Kubelet | Node-level execution | Node goes NotReady; its Pods are eventually evicted and replaced elsewhere |

The scheduling logic itself is far more sophisticated than simple bin-packing. The Kubernetes scheduling framework is a plugin-based architecture where each scheduling decision passes through multiple extension points: filtering, scoring, reserving, and binding. Your team can inject custom logic at any of these stages. For example, a fintech team might write a plugin that ensures payment-processing Pods always land on nodes with specific compliance certifications.
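The filter, score, reserve, and bind stages can be illustrated with a toy pipeline. This is a simplified sketch, not the scheduler's implementation: real plugins are written in Go against the scheduling framework, and the `compliance: pci` label, node names, and scoring heuristic below are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_cpu_millis: int
    labels: dict


@dataclass
class Pod:
    name: str
    cpu_request_millis: int
    required_labels: dict


def filter_nodes(pod: Pod, nodes: list) -> list:
    """Filter stage: drop nodes that cannot run the Pod at all."""
    return [
        n for n in nodes
        if n.free_cpu_millis >= pod.cpu_request_millis
        and all(n.labels.get(k) == v for k, v in pod.required_labels.items())
    ]


def score_node(pod: Pod, node: Node) -> int:
    """Score stage: prefer the node left with the most free CPU (a spreading heuristic)."""
    return node.free_cpu_millis - pod.cpu_request_millis


def schedule(pod: Pod, nodes: list) -> Optional[str]:
    feasible = filter_nodes(pod, nodes)
    if not feasible:
        return None  # no feasible node: the Pod would stay Pending
    best = max(feasible, key=lambda n: score_node(pod, n))
    best.free_cpu_millis -= pod.cpu_request_millis  # reserve capacity
    return best.name  # bind the Pod to the chosen node


nodes = [
    Node("node-a", 4000, {"compliance": "pci"}),
    Node("node-b", 8000, {}),
    Node("node-c", 1000, {"compliance": "pci"}),
]
payment = Pod("payments", 500, {"compliance": "pci"})
chosen = schedule(payment, nodes)
print(chosen)
```

In this sketch the largest node is filtered out before scoring because it lacks the required label. In a real cluster, a node affinity rule on the same label gives you this behavior without any custom plugin.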

Pro Tip: Do not customize the scheduler until you have exhausted built-in mechanisms like node affinity, Pod topology spread constraints, and resource quotas. Custom plugins add operational complexity that can turn a minor upgrade into a multi-week project.

For teams working specifically on scaling containers on AWS, or those undertaking an AWS migration for high loads, the scheduler’s behavior under stress is one of the first things to validate during load testing.

The reconciliation loop: Automated rollout and update of workloads

The reconciliation loop is where Kubernetes earns its reputation for resilience. It is also where teams that treat Kubernetes as a deployment script rather than a control system run into serious problems.

Kubernetes orchestrates workload rollouts via controllers that continuously reconcile desired versus actual state at a controlled rate. The Deployment controller is the most commonly used example. When you update a container image, the controller does not simply kill all old Pods and start new ones. It creates a new ReplicaSet, gradually scales it up while scaling down the old one, and monitors health probes at every step.
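A small simulation shows the shape of that gradual handover. It is a deliberately simplified model that assumes every new Pod becomes ready immediately; the function name and the step-by-step accounting are illustrative and do not mirror the Deployment controller's internals.

```python
def rolling_update(replicas: int, max_surge: int, max_unavailable: int) -> list:
    """Return the (old_pods, new_pods) counts after each step of a simulated rollout."""
    if max_surge == 0 and max_unavailable == 0:
        # With no budget in either direction the rollout could never make progress.
        raise ValueError("maxSurge and maxUnavailable cannot both be zero")
    old, new = replicas, 0
    steps = [(old, new)]
    while old > 0 or new < replicas:
        # Scale the new ReplicaSet up as far as the surge budget allows.
        new += min(replicas - new, replicas + max_surge - (old + new))
        # Scale the old ReplicaSet down without dropping below the availability floor.
        old -= min(old, (old + new) - (replicas - max_unavailable))
        steps.append((old, new))
    return steps


steps = rolling_update(replicas=4, max_surge=1, max_unavailable=0)
for old, new in steps:
    print(f"old={old} new={new} total={old + new}")
```

With a surge of one and no unavailability budget, the total never exceeds five Pods and never drops below four, so capacity is preserved throughout the update.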

| Update approach | Execution | Rollback speed | Risk level |
|---|---|---|---|
| Manual scripted | Sequential, operator-driven | Slow, error-prone | High |
| Imperative `kubectl` commands | Direct, stateless | Manual | Medium |
| Declarative Kubernetes rollout | Automated, state-driven | Instant (`kubectl rollout undo`) | Low |

The practical difference is enormous. With manual or scripted approaches, a failed deployment at step 7 of 12 might leave your cluster in an indeterminate state. With Kubernetes declarative rollouts, the cluster always has a coherent desired state to work toward, and rollback is a single command that re-points the controller at the previous ReplicaSet.

Key behaviors to leverage during rollouts:

- maxUnavailable: Caps how many Pods may be unavailable at any point during the update

- maxSurge: Caps how many extra Pods can exist above your desired replica count during the transition

- progressDeadlineSeconds: Marks the rollout as failed if it stalls beyond a time threshold. Kubernetes does not roll back on its own, so pair this with monitoring or pipeline logic that runs kubectl rollout undo

- Readiness probes: Gate traffic routing so a Pod only receives requests once it is truly ready
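When these settings are given as percentages, the rounding direction matters: Kubernetes rounds maxSurge up and maxUnavailable down, and both default to 25%. The helper below is a hypothetical calculator for sanity-checking capacity during a rollout, not part of any Kubernetes client library.

```python
def resolve(value, replicas: int, round_up: bool) -> int:
    """Turn an absolute count or a percentage string such as "25%" into a Pod count."""
    if isinstance(value, int):
        return value
    scaled = int(value.rstrip("%")) * replicas
    # Integer arithmetic avoids floating-point surprises when rounding.
    return -(-scaled // 100) if round_up else scaled // 100


def rollout_bounds(replicas: int, max_surge="25%", max_unavailable="25%") -> dict:
    surge = resolve(max_surge, replicas, round_up=True)               # maxSurge rounds up
    unavailable = resolve(max_unavailable, replicas, round_up=False)  # maxUnavailable rounds down
    return {
        "max_total_pods": replicas + surge,
        "min_available_pods": replicas - unavailable,
    }


bounds = rollout_bounds(10)
print(bounds)  # {'max_total_pods': 13, 'min_available_pods': 8}
```

For a 10-replica Deployment on the defaults, you need node headroom for up to 13 Pods and should expect as few as 8 serving traffic mid-rollout.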

Pro Tip: Always define both readinessProbe and livenessProbe in your Pod spec. Without them, Kubernetes assumes a container is healthy the moment it starts, which means traffic can reach a Pod before your application is initialized. This is one of the most common causes of transient 502 errors during rolling updates.
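As a rough illustration, a container spec with both probes might look like the following, again expressed as Python data that serializes to JSON. The `/ready` and `/healthz` paths, port 8080, the image path, and the timing values are assumptions; use whatever endpoints and thresholds fit your application.

```python
import json


def http_probe(path: str, port: int, period_seconds: int, failure_threshold: int) -> dict:
    """Build an HTTP GET probe definition."""
    return {
        "httpGet": {"path": path, "port": port},
        "periodSeconds": period_seconds,
        "failureThreshold": failure_threshold,
    }


container = {
    "name": "web",
    "image": "registry.example.com/web:1.0.0",  # hypothetical image
    "ports": [{"containerPort": 8080}],
    # Readiness decides whether the Pod receives traffic.
    "readinessProbe": http_probe("/ready", 8080, period_seconds=5, failure_threshold=3),
    # Liveness decides whether the container gets restarted.
    "livenessProbe": http_probe("/healthz", 8080, period_seconds=10, failure_threshold=3),
}
print(json.dumps(container, indent=2))
```

Keeping the two endpoints separate lets the application report "alive but not ready" while it warms caches or opens connections, without being restarted for it.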

A thorough application rollout checklist is worth building into your team’s deployment pipeline as a standard gate. For businesses running AWS ecommerce orchestration or handling high loads, automated rollouts with properly tuned surge and unavailability parameters directly translate to uptime metrics and customer satisfaction.

Networking orchestration: Service endpoints, Gateway, and Ingress

Container orchestration without networking orchestration is incomplete. Kubernetes solves a fundamental problem in microservices architectures: backend Pods are ephemeral and their IP addresses change constantly. How do you route traffic reliably to something that keeps moving?

The answer is the Service API. Kubernetes networking orchestration covers stable Service endpoints and external access via Gateway and Ingress APIs. A Service object provides a stable IP address and DNS name that persists regardless of how many times the underlying Pods are replaced or rescheduled.

Under the hood, the service proxy (typically kube-proxy or a modern eBPF-based replacement) maintains routing rules using EndpointSlice objects. As Pods come and go, the EndpointSlice is updated and the proxy rules follow automatically. From the perspective of any client inside or outside the cluster, the Service endpoint never changes.
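The relationship between a Service selector and its changing backends can be sketched in a few lines. This is a toy model of the idea behind EndpointSlice updates, with hypothetical IPs and labels; it does not reproduce the real controller or proxy.

```python
from dataclasses import dataclass


@dataclass
class Pod:
    ip: str
    labels: dict
    ready: bool


def endpoints_for(selector: dict, pods: list) -> list:
    """Return the IPs of ready Pods whose labels match the Service selector."""
    return sorted(
        p.ip for p in pods
        if p.ready and all(p.labels.get(k) == v for k, v in selector.items())
    )


selector = {"app": "web"}
pods = [
    Pod("10.0.1.5", {"app": "web"}, ready=True),
    Pod("10.0.2.9", {"app": "web"}, ready=False),  # still starting
    Pod("10.0.3.2", {"app": "api"}, ready=True),   # different workload
]
before = endpoints_for(selector, pods)

# One Pod is replaced with a new IP and another becomes ready.
# The selector, and therefore the Service, is untouched.
pods[0] = Pod("10.0.4.7", {"app": "web"}, ready=True)
pods[1].ready = True
after = endpoints_for(selector, pods)
print(before, after)
```

The backend list changed completely between the two calls, yet the client-facing contract (the selector behind a fixed Service name) did not change at all.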

“The Service API provides a stable, long-lived IP and hostname for a set of changing backend Pods, abstracting away the churn of container lifecycle events.” (Kubernetes Services and Networking)

For external traffic, Kubernetes offers two primary abstractions:

- Ingress: An older but widely deployed API that defines HTTP/HTTPS routing rules, TLS termination, and host- and path-based routing to backend Services

- Gateway API: A newer, more expressive API that supports multiple traffic protocols, role-based configuration (infrastructure providers versus application developers), and extensible routing policies
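Both APIs let you express path-prefix routing to backend Services. The sketch below is a toy router that mimics that idea with segment-aware prefix matching and longest-prefix precedence. It is an assumption-laden illustration, not how any particular Ingress controller or Gateway implementation works, and the Service names are hypothetical.

```python
from typing import Optional


def prefix_matches(prefix: str, path: str) -> bool:
    """Match on whole path segments, so /cart matches /cart/items but not /cartoon."""
    prefix = prefix.rstrip("/")
    return prefix == "" or path == prefix or path.startswith(prefix + "/")


def route(path: str, rules: dict) -> Optional[str]:
    """Pick the backend whose prefix matches and is longest; None if nothing matches."""
    candidates = [p for p in rules if prefix_matches(p, path)]
    if not candidates:
        return None
    return rules[max(candidates, key=len)]


rules = {
    "/": "storefront",
    "/cart": "cart-svc",
    "/cart/checkout": "payments-svc",
}
for path in ("/cart/checkout/confirm", "/cart/items", "/cartoon"):
    print(path, "->", route(path, rules))
```

A rule set like this is where misconfiguration tends to hide: a prefix that is too broad quietly sends traffic to a backend that was never meant to be exposed.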

The Gateway API is rapidly becoming the preferred approach for complex routing scenarios, especially in organizations running many microservices with distinct traffic policies. For businesses building AWS retail infrastructure or implementing cloud-native payment infrastructure, reliable and secure external routing is not optional. A misconfigured Ingress can expose internal services or break SSL termination in ways that create both security and compliance risks.

Kubernetes orchestration vs. workflow orchestration: Avoiding common confusion

Here is a mistake we see regularly, even among experienced engineering teams. Someone reads about “orchestration,” gets excited about Kubernetes, and starts trying to use it to manage data pipelines, ETL jobs, or multi-step business workflows. That is the wrong tool for the job, and the architecture suffers for it.

Kubernetes is primarily for container and workload orchestration, not for general DAG (directed acyclic graph) workflow execution. Tools designed for workflow orchestration handle a fundamentally different problem: sequencing tasks with complex dependencies, managing retries at the task level, tracking lineage, and providing visibility into multi-step pipelines.

The distinction matters in practice:

- Kubernetes manages where and how containers run, ensuring they stay alive and receive traffic

- Workflow engines manage what happens when, coordinating the logical sequence of operations within a business process or data pipeline

- Running an Airflow DAG on Kubernetes (using the KubernetesExecutor) is a valid and popular pattern; replacing Airflow with Kubernetes is an architectural mistake

- Kubernetes Jobs and CronJobs handle simple batch tasks, but they lack the dependency management, observability, and retry semantics that dedicated workflow tools provide

Best practices for integrating both:

- Use Kubernetes as the execution substrate (every task runs in a container on a managed cluster)

- Use a workflow engine for orchestrating the logical sequence and dependencies between tasks

- Keep infrastructure orchestration (Kubernetes) and workflow orchestration (Airflow, Prefect, or similar) as separate concerns with clean interfaces between them
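To see what the workflow layer contributes, consider dependency ordering, which Kubernetes Jobs do not provide on their own. This sketch uses Python's standard-library `graphlib` to order a hypothetical four-task pipeline; `run_task` is a placeholder for where a real engine would launch a Job or Pod on the cluster.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical pipeline).
dag = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"extract"},
    "load": {"transform", "validate"},
}


def run_task(name: str) -> str:
    """Placeholder: a real workflow engine would start a Kubernetes Job or Pod here."""
    return f"ran {name}"


# The workflow layer decides the order; Kubernetes would only run each container.
order = list(TopologicalSorter(dag).static_order())
results = [run_task(task) for task in order]
print(order)
```

The split of responsibilities is visible in the code: the sorter knows about dependencies and nothing about containers, while `run_task` is the only point that would touch the cluster.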

Confusing these two layers leads to brittle architectures where teams try to encode business logic into Kubernetes manifests or use workflow DAGs to manage infrastructure that should be self-healing. For teams evaluating cloud platform options, a comparison of AWS competitors for retail AI shows how platform choice affects both workload and workflow orchestration capabilities.

The uncomfortable truth most experts won’t tell you about Kubernetes orchestration

After delivering over 700 projects since 2010, we have seen a consistent pattern. Engineering teams adopt Kubernetes with genuine enthusiasm, master the basics quickly, and then plateau. They run containers successfully. They do rolling updates. But they never fully capture the operational guarantees that Kubernetes was designed to provide.

The reason is almost always the same: the team learned how to use Kubernetes without internalizing what Kubernetes is doing. They treat the reconciliation loop as background magic rather than a first-class operational primitive. They write imperative scripts that fight the declarative model. They add custom scheduler plugins because the default behavior was not fully understood, not because the business genuinely needed them.

The reconciliation loop is transformative only when you let it work. That means defining accurate readiness and liveness probes. It means setting resource requests and limits that reflect actual workload behavior. It means trusting the controller to manage rollouts rather than scripting around it.

Overcomplexity is the silent killer in Kubernetes adoption. We have inherited clusters with 15 custom admission webhooks, 6 different CNI plugins, and scheduling configurations that nobody on the current team understands. Those clusters are not scalable. They are fragile. Every upgrade becomes a negotiation with technical debt.

Our advice to every CTO considering Kubernetes maturity investments: start with the simplest configuration that meets your business requirements. Add complexity only when a specific, measurable business need demands it. The scheduler extensibility is powerful, but it is a power you should earn through demonstrated necessity, not adopt preemptively.

The teams that get the most out of Kubernetes are the ones who deeply understand the control plane, trust the reconciliation model, and resist the temptation to customize everything. Our Kubernetes support specialists work with engineering leaders to build that operational maturity systematically rather than by trial and error.

Take the next step: Kubernetes orchestration support for your team

Translating the principles in this article into production-grade infrastructure requires more than documentation. It requires experience with the failure modes, the upgrade paths, and the specific constraints of your business context.

At IT-Magic, we have helped fintech, retail, and enterprise engineering teams move from fragile container deployments to resilient, declaratively managed Kubernetes infrastructure on AWS. Whether you are evaluating EKS for the first time or untangling a cluster that has accumulated years of operational debt, our Kubernetes support solutions are built around your specific workload and compliance requirements. For financial services teams with strict regulatory constraints, our AWS for fintech practice covers PCI DSS-aligned orchestration architectures that your compliance team will actually approve.

Frequently asked questions

How does Kubernetes orchestration improve uptime?

Kubernetes continuously reconciles current state to the declared desired state using independent control loops, so when a node fails or a Pod crashes, the system self-corrects without manual intervention.

Can Kubernetes orchestration be customized for enterprise needs?

Yes, the plugin-based Scheduling Framework allows teams to inject custom logic at multiple points in the scheduling pipeline, enabling business-specific node selection, compliance constraints, and resource policies.

Is Kubernetes orchestration suitable for workflow engines like Airflow?

No. Kubernetes handles container and workload orchestration, not DAG-based workflow execution. Use Kubernetes as the execution platform for workflow tasks, but keep a dedicated workflow tool for managing task dependencies and sequencing.

How does orchestration handle live updates and rollbacks?

The Deployment controller changes actual state to desired state at a controlled rate, managing ReplicaSets progressively and supporting instant rollback with a single command if the update introduces problems.

What are stable service endpoints in Kubernetes?

Stable service endpoints are persistent IPs and DNS names provided by the Service API for dynamic backend Pods, ensuring that clients always have a consistent address even as the underlying containers are replaced or rescheduled.
